Energy production requires a fundamental understanding of where resources are located, the extent or volumes of the hydrocarbons in place and how to exploit or extract them cost-effectively and safely. Operating companies have deployed significant capital over the last decade to collect massive quantities of real-time sensor data; however, particularly given current commodity prices, they are still seeking ways to modernize their analysis techniques and thus realize the return on investment from their data sources.

To accomplish this mission-critical objective, these organizations need a platform to rapidly integrate all field data, including cores, well logs, completion designs, real-time production data, maintenance records and financial constraints. Additionally, they require software capable of flexible descriptive analytics to visualize and contextualize this deluge of data.

However, such applications should not only integrate and visualize the different data sources but should also provide predictive analytics so that engineers can quickly predict production responses to stimulation, anticipate mechanical equipment failure, forecast fieldwide effects of changes in injection redistribution and capacity, and evaluate infill drilling and other critical decisions.

Essentially, to transform data into dollars, an operating company needs a prescriptive analytics tool to explore the millions of possible what-if scenarios to identify the optimal operational and development plans.

Data Physics, a quantitative optimization framework, has been validated with eight operating companies across 14 enhanced oil recovery (EOR) projects using data from more than 10,000 wells, enabling operators to increase production by more than 20% and/or reduce their operating expenses by more than 40%.

Decisions to optimize

As producers strive to efficiently manage capital in EOR projects, petrotechnical experts grapple with mission-critical decisions that determine the profitability of operations. In maturing reservoirs undergoing waterflooding, CO2 flooding, chemical flooding or steamflooding, success hinges on a number of decisions whose impacts play out over different time frames.

• Short term

- Which wells to stimulate and when;

- What cyclic steam volumes to pump and how to establish prioritized schedules in steamfloods; and

- How to adjust injection pressures to achieve target injection volumes.

• Medium term

- Areal redistribution of injectant on a pattern-by-pattern basis;

- Vertical redistribution of injectant within multizoned reservoirs; and

- How to adjust injection plans based on facilities constraints.

• Long term

- When and how much to reduce/increase injection capacity;

- Where to drill infill wells; and

- How to design pattern shapes and sizes.

Limitations of existing physics-based modeling tools

For crucial decisions, operators typically rely on semi-quantitative approaches such as decline curve analysis or simple analytical models. These methods can use only a small portion of the available data and function as rules of thumb, resulting in qualitatively optimized reservoir management decisions at best.
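For reference, decline curve analysis is often based on the Arps rate-time relations. The sketch below is a minimal illustration under stated assumptions, not any operator's workflow: the function name `arps_rate` and the example parameter values are hypothetical. The only inputs are a few parameters fitted to one well's rate history, which illustrates why such methods draw on a small portion of the available data.

```python
import math


def arps_rate(t, qi, di, b):
    """Arps decline: production rate at time t.

    qi: initial rate; di: initial nominal decline rate (1/time);
    b: decline exponent (0 = exponential, 1 = harmonic, between = hyperbolic).
    All example values used with this function are hypothetical.
    """
    if b == 0:
        return qi * math.exp(-di * t)
    return qi / (1.0 + b * di * t) ** (1.0 / b)


# Hypothetical well: 1,000 bbl/d initial rate, 10% per month initial decline.
exponential_rate = arps_rate(10, qi=1000.0, di=0.1, b=0.0)
harmonic_rate = arps_rate(10, qi=1000.0, di=0.1, b=1.0)
```

With the same initial rate and decline, the harmonic case retains more rate after 10 months than the exponential case, so the choice of `b` alone can noticeably change a forecast.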

At the other extreme, major oil companies leverage sophisticated predictive modeling. Reservoir simulation is the most advanced technique available in that it is capable of integrating disparate data sources and predicting over long time horizons.

While reservoir simulation is an excellent tool for field studies and long-term planning, certain limitations prevent operators from leveraging simulation for day-to-day decision-making. These limitations include:

- Reliance on geomodeling workflows that require months to years of manual setup;

- Hours/days required to run a single scenario due to computational complexity;

- Impracticality of obtaining optimal solutions given a limited set of scenarios; and

- Sequential data integration that results in inconsistencies and failure to honor all the data.

In essence, reservoir simulation enables operators to precisely model the physics of a small number of scenarios; however, it is not designed to produce thousands or millions of scenarios and to automatically update those scenarios based on real-time data. Even for operators with the world’s most sophisticated simulations, there is a need for faster models to achieve prescriptive analytics and leverage real-time data.

Limitations of existing data-driven modeling tools

Purely data-driven models lie at the opposite end of the spectrum from physics-based reservoir simulation. While simulation models require months to set up and days to run, machine learning models can be built in days and run across a full field in real time to rapidly explore thousands of scenarios and identify optimal solutions. Although such models offer a significant speed advantage and enable predictive analytics, in domains such as unconventionals where the reservoir physics are relatively more difficult to model, the absence of underlying physics prevents machine learning’s use for quantitative optimization. The most notable challenges to quantitative optimization based on data-driven approaches include:

- Accurate prediction limited to already-measured responses in the reservoir;

- Susceptibility to significant prediction errors due to data quality issues;

- Inability to predict drilling responses given the absence of data at new locations; and

- Poor predictability over longer time horizons and changing reservoir conditions.

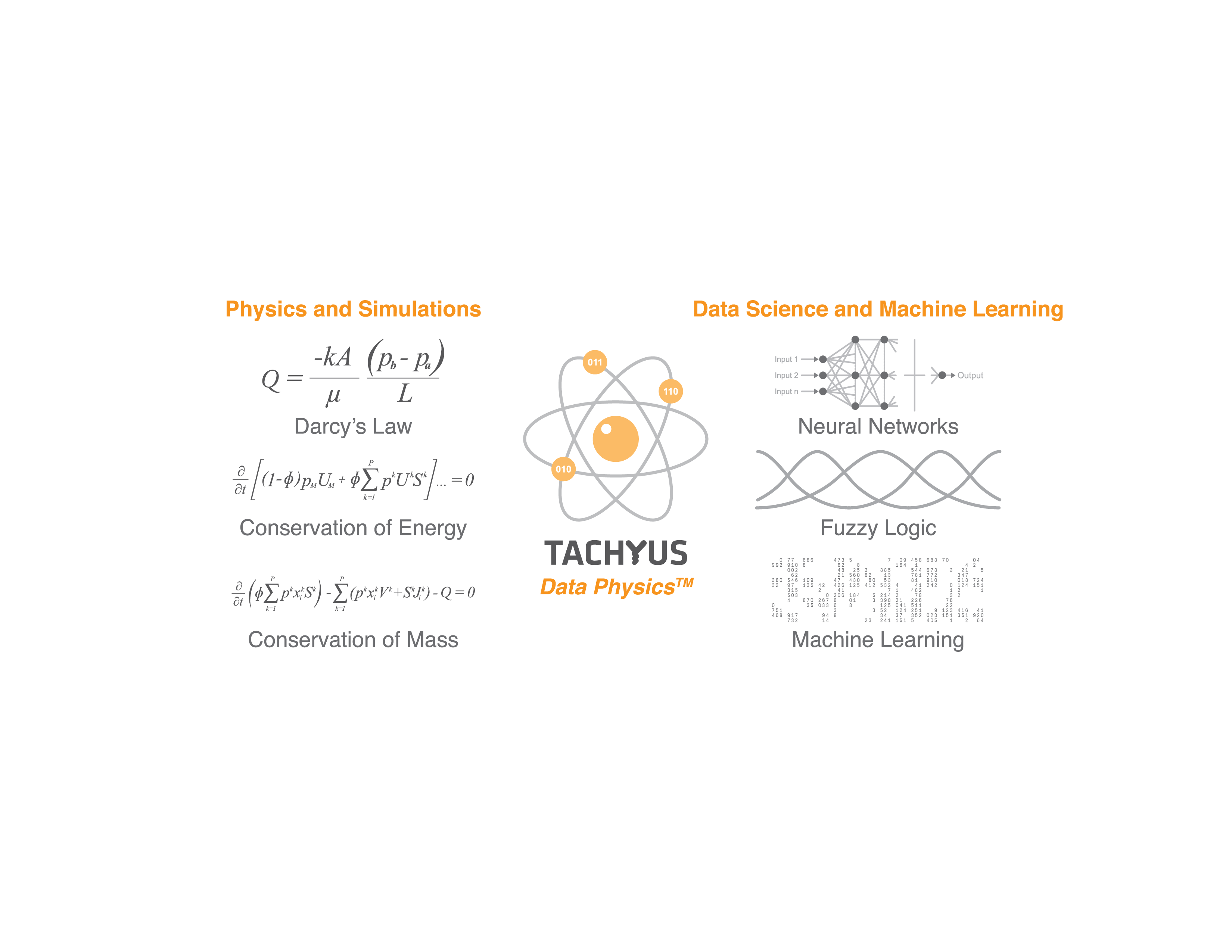

Physics-based reservoir simulation and data-driven machine learning offer complementary strengths. An ideal predictive model would combine the speed and flexibility of machine learning with the predictive accuracy of reservoir simulation so that operators could integrate data in real time to quantitatively optimize key reservoir decisions continuously.

Merging physics, data

Data Physics merges modern data science and the physics of reservoir simulation. Like machine-learning models, Data Physics models require only days to set up and can be run in real time. Additionally, because the models include all the same physics as a reservoir simulation, they offer excellent long-term predictive capacity even when historical data are sparse or missing.

Data Physics models integrate production data and well log data in a single assimilation step, unlike traditional sequential reservoir simulation workflows.

Data assimilation is automatic and leverages sophisticated algorithms, requiring minimal human intervention. These models directly incorporate raw data such as log responses without the need for manual interpretation. Data Physics models can therefore be rapidly built and continuously updated while assimilating various forms of data without inconsistencies.

Closed-loop optimization

After fitting historical data and validating predictive capacity, Data Physics models can be used to quantitatively optimize any future performance indicator such as short-term cash flow, net present value or ultimate recovery. Closed-loop optimization becomes possible due to the speed of Data Physics models.
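As an illustration of one such performance indicator, net present value discounts each period's cash flow back to today. A minimal sketch follows, assuming yearly cash flows and a constant discount rate; the function name, the plan names and all numbers are hypothetical and are not taken from any Data Physics model.

```python
def net_present_value(cash_flows, discount_rate):
    """Discount a list of yearly cash flows (year 0 first) to present value."""
    return sum(cf / (1.0 + discount_rate) ** year
               for year, cf in enumerate(cash_flows))


# Hypothetical plans: year-0 investment followed by yearly net cash flows.
plans = {
    "base": [0.0, 50.0, 50.0, 50.0],
    "infill": [-60.0, 80.0, 80.0, 80.0],
}

# Rank the candidate plans by NPV at an assumed 10% discount rate.
best_plan = max(plans, key=lambda name: net_present_value(plans[name], 0.10))
```

An optimizer would evaluate an objective like this for every candidate scenario, which is practical only when each model run is fast.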

Full steamflood optimization, for example, consists of a combination of pattern design, areal steam redistribution, vertical steam redistribution, selection of total steam capacity and infill drilling location selection. Focusing solely on steam capacity optimization, Figure 2 shows sample optimized and nonoptimized injection scenarios. In the nonoptimized scenario, the operator is not yet maximizing production or minimizing injection.

FIGURE 2. This chart shows the benefits of a prescriptive analytical model. The Pareto front describes the optimal solutions, allowing engineers to make operational decisions that have the most significant financial impact for any specific input constraint. (Source: Tachyus)

Depending on the operator’s priorities and risk tolerance, there are several optimal solutions. For example, steam injection can decrease almost 40% while maintaining oil production, or oil production can increase 20% while maintaining injection.
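The idea of a Pareto front can be sketched in a few lines: a scenario is optimal if no other scenario injects less steam while producing at least as much oil, or produces more oil while injecting no more steam. The example below is a generic illustration, not the Data Physics algorithm; the `Scenario` type, the function names and the index values (current operation set to 100) are hypothetical.

```python
from typing import NamedTuple


class Scenario(NamedTuple):
    name: str
    steam_injection: float  # lower is better
    oil_production: float   # higher is better


def dominates(a, b):
    """True if a is at least as good as b on both axes and better on one."""
    no_worse = (a.steam_injection <= b.steam_injection
                and a.oil_production >= b.oil_production)
    better = (a.steam_injection < b.steam_injection
              or a.oil_production > b.oil_production)
    return no_worse and better


def pareto_front(scenarios):
    """Return the non-dominated scenarios, sorted by steam injection."""
    front = [s for s in scenarios
             if not any(dominates(other, s) for other in scenarios)]
    return sorted(front, key=lambda s: s.steam_injection)


# Hypothetical scenarios, indexed so that current operation = 100 on both axes.
scenarios = [
    Scenario("current", 100.0, 100.0),
    Scenario("A", 60.0, 100.0),   # less steam, same oil
    Scenario("B", 100.0, 120.0),  # same steam, more oil
    Scenario("C", 80.0, 110.0),   # a compromise between A and B
    Scenario("D", 90.0, 105.0),   # worse than C on both axes
]
front = pareto_front(scenarios)
```

Here `front` contains A, C and B; the current operation and D are dominated and drop out. Which point on the front to choose depends on the operator's priorities and constraints.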

By harnessing the power of data with sophisticated modeling tools like Data Physics, producers are realizing quantifiable improvements in recovery.