(Source: Hart Energy; Shutterstock.com)

With capital tight and income stagnant, producers are increasingly turning to automation to improve efficiency and reduce spending. But buying some sensors, off-the-shelf SCADA software and an extra server does not automatically achieve those goals.

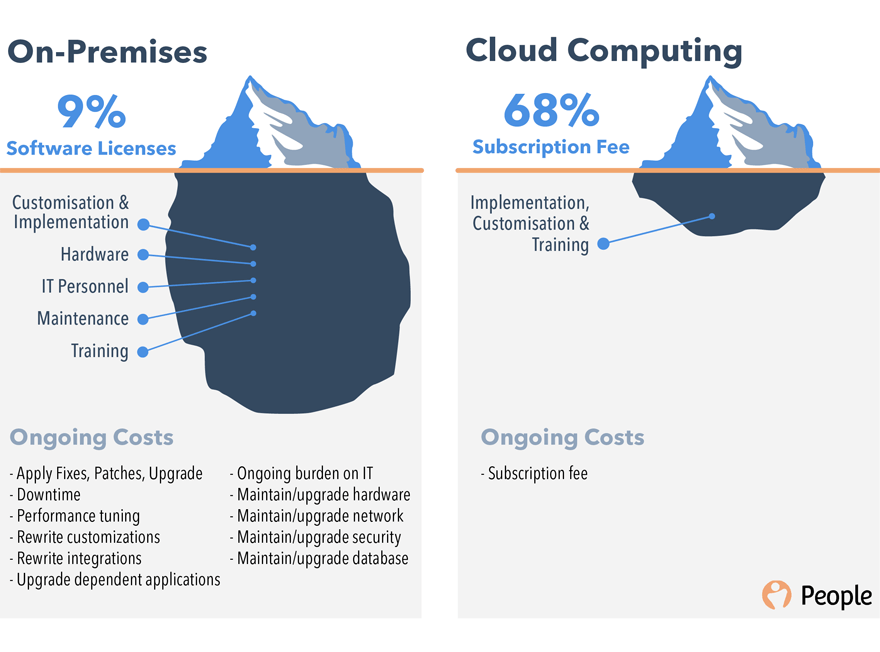

In fact, in-house automation systems are far more expensive than third-party, cloud-based systems in a number of ways, many of them not obvious. This article examines four areas (time to implement, capex, ongoing expenses and data security) where outsourcing can be more efficient, including some in-house costs that are often left out of the decision-making process.

Time to implement

Time is money, and for a company leaning on automation for cost savings, every day’s delay in implementation can cost thousands of dollars. But delays extending to months or years are common for in-house systems.

It begins with months of researching the almost endless options in sensors, communication devices for data relay, data hosting, software and many other seemingly small but vital puzzle pieces. Throughout that time, automation's cost savings are delayed, and the department responsible for the research is racking up payroll hours.

In the worst case, wrong decisions are made and something works poorly or not at all. That delays the project even further and can cause cost overruns if the unsuitable equipment cannot be returned or traded in.

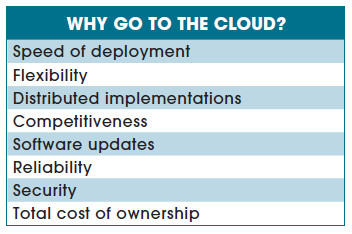

With a cloud option, leading suppliers have already evaluated the equipment and software options, so they only need to learn the client's needs. They can then install the appropriate components within a few days or weeks, and the producer's bottom line starts improving on that same schedule.

The cloud automation company can draw on data from hundreds of installations and thousands of wells in dozens of situations to equip and right-size each installation correctly. By aiming for not too much, not too little, but just right for each situation, the provider makes a smooth rollout more likely. And if a mistake does happen, its staff is equipped to recognize the problem quickly and adjust on the fly.

Capex

Depending on the size of the company's holdings, the SCADA platform, implementation and infrastructure can be very costly. Field hardware costs remain with either approach, but outsourcing brings significant savings in the other areas, which is very welcome in today's tight equity markets.

In the cloud option, under the automation-as-a-service model (an extension of software-as-a-service), the SCADA firm provides all necessary software in return for a monthly fee.
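
To make the upfront-versus-monthly-fee trade-off concrete, here is a minimal Python sketch of a break-even comparison. It is an illustration, not a costing method from any SCADA provider: the `AutomationOption` fields, the function names and every figure in it are hypothetical placeholders, not industry data. Field hardware is left out because that cost remains with either approach, and time to implement is folded in by starting savings only after the go-live month while costs accrue from day one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AutomationOption:
    """Hypothetical cost profile for one automation approach (illustrative inputs only)."""
    name: str
    upfront: float          # one-time capex: SCADA platform, implementation, infrastructure
    monthly_cost: float     # recurring cost: subscription fee, or staff, training and upgrades
    months_to_go_live: int  # implementation time before any savings begin
    monthly_savings: float  # operating savings per month once the system is live


def net_position(opt: AutomationOption, month: int) -> float:
    """Cumulative savings minus cumulative costs after `month` months (costs accrue from month 0)."""
    live_months = max(0, month - opt.months_to_go_live)
    return opt.monthly_savings * live_months - opt.upfront - opt.monthly_cost * month


def break_even_month(opt: AutomationOption, horizon: int = 120) -> Optional[int]:
    """First month (1..horizon) at which the option has paid for itself, or None if it never does."""
    for month in range(1, horizon + 1):
        if net_position(opt, month) >= 0:
            return month
    return None


# Placeholder figures, chosen only to exercise the model.
in_house = AutomationOption("in-house", upfront=250_000, monthly_cost=20_000,
                            months_to_go_live=9, monthly_savings=40_000)
cloud = AutomationOption("cloud", upfront=25_000, monthly_cost=12_000,
                         months_to_go_live=1, monthly_savings=40_000)

for option in (in_house, cloud):
    print(option.name, break_even_month(option))
```

With these made-up inputs the script prints `in-house 31` and `cloud 3`. Real quotes, payroll figures and implementation timelines could produce a very different answer, which is the reason to run the numbers rather than assume them.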

Ongoing expenses

Perhaps the highest and most often overlooked cost is not a one-time expense. It goes on forever.

That cost is payroll for all the required personnel. Expanding the in-office IT staff is not the main expense; depending on the number and size of offices, it may take only a few extra people. The real challenge is in the field, where harsh conditions and broad expanses require more people, and even a few field issues can consume many payroll hours for repairs and replacements.

Training all involved personnel also belongs on this list, because it is not one-and-done. Initial IT training is a significant cost in itself, often taking days or weeks, and every subsequent upgrade means further training for IT personnel.

With technology advancing ever faster, no hardware or software lasts forever. Upgrading either can be costly and time-consuming for those same IT personnel, and upgrades may not go smoothly if components turn out not to be compatible.

Cloud services greatly reduce payroll because service personnel are shared among clients, so each company pays only for the time it needs. The provider trains those personnel as needed.

For more than a decade, general-use software has been trending toward "evergreen" delivery, residing in the cloud instead of on individual computers. Upgrades and fixes then arrive as needed, in small, easily digested increments, so formal retraining is rarely needed.

The cloud provider also handles hardware updates as appropriate, normally at no cost to the client, and has already tested all hardware and software components to verify their compatibility.

Data security

Perhaps the biggest myth about in-house versus third-party, cloud-based systems concerns which one stores data more securely. It is often the first question SCADA providers are asked, because producers worry about their data sitting on someone else's server in a remote location, seemingly out of their control.

Data security has two main aspects: data breaches and data loss. Hacking, or security breaches, is clients' main concern. But data loss from power surges, fires or a simple disk crash is just as important, and it is a much bigger problem for single-location onsite hosting than for the cloud.

For self-hosted systems, protection against hacking requires continually monitoring and upgrading security software and systems. Those updates are usually subscription-based and applied automatically.

Most data breaches, however, come from employees opening phishing emails or even entering account information in response to them. Onsite systems rarely have firewalls strong enough to prevent this. Whatever the system, good employee security training is always in order.

Data preservation, on the other hand, can be much more precarious. In the past, it was not unusual for SCADA users to keep all their SCADA data on a single computer under an employee's desk, where two ounces of spilled coffee could crash the entire database. Without a backup, that loss would be permanent and devastating.

Regular onsite backups, which are more likely to happen if they are automated, do help when a single server fails. But they are no help in a fire, tornado or other event that destroys the whole site.
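
Automating a backup mostly means scheduling a job that copies the data and confirms each copy is intact. The Python sketch that follows shows only the copy-and-verify step; the function names, file name and directory layout are hypothetical, and scheduling (for example with cron or Windows Task Scheduler) is left out. It also illustrates the limit just described: the demo's backup targets sit on one machine, so, like any onsite backup, they would not survive a fire.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup_to_all(source: Path, destinations: list[Path]) -> dict[Path, bool]:
    """Copy `source` into each destination directory; report whether each copy's checksum matches."""
    expected = sha256_of(source)
    results: dict[Path, bool] = {}
    for dest_dir in destinations:
        dest_dir.mkdir(parents=True, exist_ok=True)
        copy = dest_dir / source.name
        shutil.copy2(source, copy)  # copies contents and file metadata
        results[dest_dir] = sha256_of(copy) == expected
    return results


# Demo: temporary directories stand in for backup targets. In practice at least one
# target should sit at a different physical site, or a fire still takes every copy.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    db_file = root / "scada_history.db"  # hypothetical file name
    db_file.write_bytes(b"example SCADA records")
    report = backup_to_all(db_file, [root / "backup_a", root / "backup_b"])
    print(all(report.values()))
```

The checksum comparison catches a copy that was silently truncated or corrupted, but where the copies live determines what disasters the backup can survive.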

In cloud-based systems, the provider applies its security expertise, running antivirus and anti-malware software and constantly monitoring for new threats. All of this is invisible to the client.

Data are automatically and redundantly backed up on multiple servers in geographically separate locations. If a power surge or fire strikes the client's office, no data are lost because none are stored there; as soon as the local computers are running again, all company data are available.

Conclusion

The era of tight investment capital has shown producers the economies of outsourcing more and more services, and automation, because of its complexity and cost, is a particularly good candidate.

In addition to the advantages above, data in the cloud are accessible 24/7 around the globe, wherever there is internet access. Distant offices can collaborate, field personnel can review well data on site and information flows seamlessly across departments.
