Reservoir software, from seismic interpretation through petrophysics, reservoir characterization, modeling and dynamic fluid simulation, has evolved considerably since the 1980s. Geoscientists and engineers have adopted a mix of platform software suites and specialized software tools—the former to handle the workflow from inception to final results and the latter to access specialized capabilities. Users were burdened with the back-and-forth movement of data using simplistic export/import files such as LAS, character-based grids or one-on-one connection utilities. Such transfers and the necessary verifications and corrections around the incoming data are time-consuming, so asset teams have typically limited the mix of software solutions to retain control of the process and avoid a resource drain.

Two challenges have emerged. The first is cloud computing: the migration of applications to the cloud and the shift from monolithic application suites to smaller software applications based on the microservices concept. The second is market diversification, with the emergence of a number of small, specialized E&P software companies as well as artificial intelligence, machine learning and other data analytics solutions.

For more than 20 years, the industry has been working on better integration of different reservoir and subsurface software, starting with the 1990s RESCUE data exchange initiative. More recently, RESQML, developed within the industry data standards consortium Energistics, has gained substantially in scope and maturity since 2009.

A mature standard

The RESQML standard is published and usable without fees. However, adoption of a standard on technical merit alone can take time, so a number of software development companies participating in the special interest group overseeing RESQML organized a live demonstration of a workflow using one software product from each company. This pilot involved six software entities, supported by a Gulf of Mexico operator and its partner, which made a whole field dataset (seismic, wells and grids) available for the pilot. This was designed to be the first multiclient, multi-application, multicloud live demonstration covering subsurface modeling workflows.

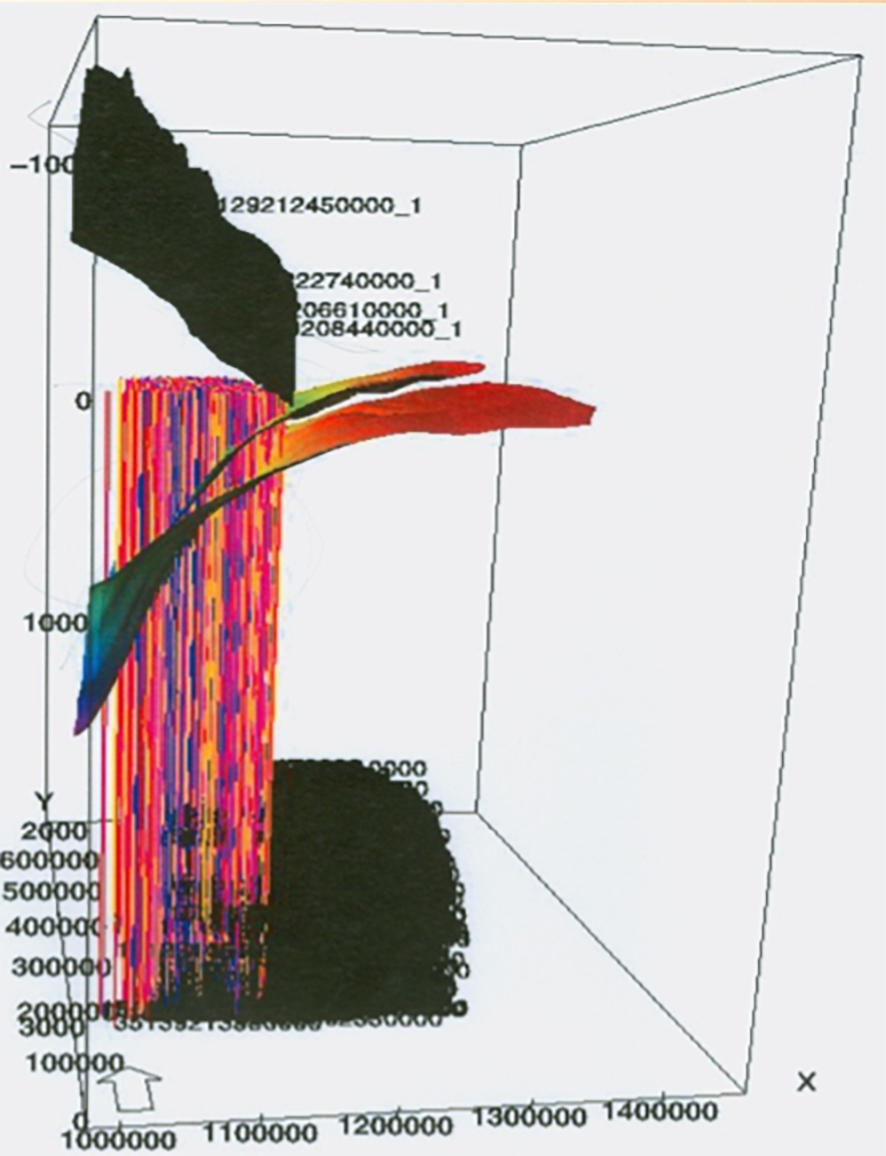

Figure 1 shows flaws associated with a data transfer lacking proper reference and cross-operating system compatibility. The surface grids are correct, but the well data lacks a depth reference, and the cube is upside down due to a point of origin discrepancy. Finally, some data are incorrectly scaled due to different binary data representations between Windows and Linux. A data exchange standard is a single comprehensive self-describing format (it can be accessed independently of any software product). It also is independent of operating system specificities, exposes data schemas within the format and provides identifiers so that data are always tied to the right geoscience or engineering objects. Modern data exchange standards are also rich in metadata, ensuring recipients of the data can correctly position and scale the data.
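The idea of a self-describing record can be illustrated with a minimal Python sketch. It is not the RESQML format: the field names (`uuid`, `crs`, `depth_datum`, `byte_order`) and the sample values are hypothetical, and byte order is used only as one example of a binary representation difference, since the pilot description does not say which difference affected the data in Figure 1.

```python
import struct
import uuid


def write_record(values, crs, depth_datum):
    """Bundle a binary payload with the metadata needed to interpret it.

    All field names are hypothetical and chosen for illustration only.
    """
    byte_order = "<"  # little-endian, stated explicitly rather than implied
    payload = struct.pack(f"{byte_order}{len(values)}f", *values)
    return {
        "uuid": str(uuid.uuid4()),   # ties the data to one specific object
        "crs": crs,                  # coordinate reference system label
        "depth_datum": depth_datum,  # reference level for depth values
        "byte_order": byte_order,
        "count": len(values),
        "payload": payload,
    }


def read_record(record):
    """Decode the payload using the byte order declared in the record."""
    fmt = f"{record['byte_order']}{record['count']}f"
    return list(struct.unpack(fmt, record["payload"]))


def read_guessing(record, assumed_byte_order):
    """Decode the payload while ignoring the declared byte order."""
    fmt = f"{assumed_byte_order}{record['count']}f"
    return list(struct.unpack(fmt, record["payload"]))


# Hypothetical depth samples, a CRS label and a depth datum.
record = write_record([1500.0, 2250.5], crs="EPSG:32615", depth_datum="MSL")

print(read_record(record))         # values recovered as written
print(read_guessing(record, ">"))  # same bytes, wrong assumption, wrong numbers
```

A reader that honors the declared metadata recovers the original values on any platform, while a reader that guesses produces the kind of mis-scaled data described above.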

Demonstrating operability of a data exchange standard

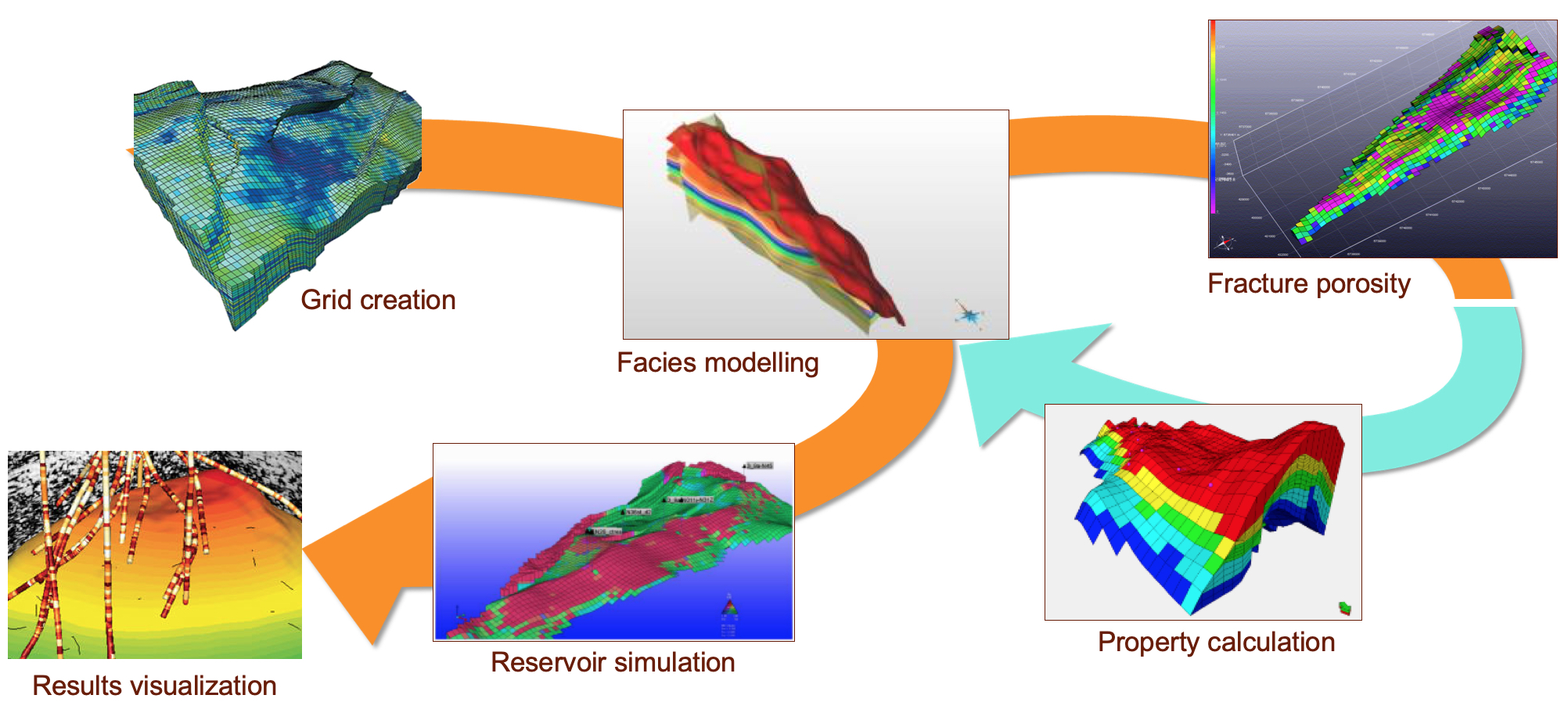

The six selected software platforms each offer a wide range of functionalities; however, for the purpose of demonstrating transfers of the subsurface model and its data, each product was used to perform only a few steps in the workflow (Figure 2).

For each of these steps, data were exported in the form of a RESQML file, and the next step would start by reading data from the RESQML file. All the software and data were located in the cloud using two different providers. The overall demonstration, performed in front of an audience at a major trade show in 2018, involved specialists from each software company operating their respective products. The time to perform the overall workflow, including import and export of the data at each step, was less than 45 minutes.

Impact on innovation

The use of standards makes existing workflows and multivendor portfolios more efficient. However, a greater benefit is the increased access to innovation. Startups, academic ventures and internal software developments all share the same predicament in a multiple vendor IT ecosystem: to integrate their innovations into a production environment, considerable time and resources are needed to build and maintain software bridges to each system.

An industrywide data exchange standard removes this barrier and makes it quicker, more reliable and more efficient to diversify the sourcing of technology tools, as long as they comply with the standard. The focus becomes the pursuit of innovation in solving E&P problems and the speed at which innovations are deployed to operational business units.
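A rough count shows the scale of the barrier. The sketch below assumes, as a simplification, that every application must exchange data with every other application in both directions; real portfolios need fewer bridges, so the numbers are an upper bound. The function names are illustrative.

```python
def point_to_point_converters(n_apps):
    """Directed bridges needed when each application talks to every other one."""
    return n_apps * (n_apps - 1)


def standard_adapters(n_apps):
    """One reader plus one writer per application for a shared format."""
    return 2 * n_apps


def cost_of_adding_one(n_apps):
    """Extra components needed when one newcomer joins n_apps existing tools."""
    bridges = point_to_point_converters(n_apps + 1) - point_to_point_converters(n_apps)
    adapters = standard_adapters(n_apps + 1) - standard_adapters(n_apps)
    return bridges, adapters


print(point_to_point_converters(6))  # 30
print(standard_adapters(6))          # 12
print(cost_of_adding_one(6))         # (12, 2)
```

Under this assumption, a newcomer joining six existing applications would need 12 bridges without a standard, but only its own reader and writer with one.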

The oil and gas industry was late to migrate software and data to the cloud. The cloud significantly changes the dynamics of scientific and engineering software; data become a pooled asset with standardized access utilities that support a broad portfolio of specialized tools.

The demonstration followed a pre-established flow from one software product to the next. However, it would be easy to add other compliant applications to perform additional steps, substitute one product for another, or skip some steps entirely as users see fit. Because the write (output) and read (input) steps define data movement with respect to a single standardized format, the tools need not be used in any fixed sequence.
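The read-and-write pattern can be sketched in a few lines of Python. JSON text stands in here for the standardized file, and the three step functions and their field names are hypothetical; they are not the steps of the pilot workflow or part of RESQML.

```python
import json


def run_step(step, document_text):
    """Read the shared document, apply one tool, write the document back."""
    model = json.loads(document_text)
    model = step(model)
    return json.dumps(model, sort_keys=True)


def add_horizons(model):
    # Hypothetical interpretation step: records two horizon names.
    model["horizons"] = ["top_reservoir", "base_reservoir"]
    return model


def build_grid(model):
    # Hypothetical gridding step: uses horizons if an earlier tool wrote them.
    model["grid"] = {"layers": 10, "bounded_by": model.get("horizons", [])}
    return model


def run_simulation(model):
    # Hypothetical simulation step: only notes whether a grid was available.
    model["simulated"] = "grid" in model
    return model


def run_workflow(steps, document_text="{}"):
    """Chain any list of steps; each one sees only the shared document."""
    for step in steps:
        document_text = run_step(step, document_text)
    return json.loads(document_text)


full = run_workflow([add_horizons, build_grid, run_simulation])
shorter = run_workflow([build_grid, run_simulation])  # first step skipped
print(full["grid"]["bounded_by"])     # ['top_reservoir', 'base_reservoir']
print(shorter["grid"]["bounded_by"])  # []
```

Because each step depends only on the shared document and not on the tool that ran before it, steps can be added, swapped or skipped without changing the others.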

Data in the context of subsurface studies and reservoir modeling are in constant flux. There is practically never a final model. New wells are drilled either to access new production or as injection wells to stimulate production, and each new well provides new data. Time-lapse seismic updates the reservoir fluid distribution maps, prompting new activities to match new information with modeling results. The data exchange standard can play the role of an evergreen, vendor-neutral long-term repository of the dataset over the whole asset life cycle, “future-proofing” the data for reuse.

Conclusions

Data exchange standards will become increasingly important as the oil and gas industry increases its reliance on data to drive its business. The advent of cloud and microservices will have a dramatic impact on the topology of geoscience and engineering software and the way users will interact with technology. Successful digital transformation will require a concerted drive to standardize the format and the content of data exchange processes. The RESQML Special Interest Group has delivered an operational standard and shown its practical use in a full-scale demonstration using a complete dataset and a diverse set of software tools.

Acknowledgements: The data were provided by BP and Shell. Staff and software from Emerson E&P Software, IFP-Beicip, Schlumberger Information Systems, Computer Modelling Group and Dynamic Graphics Inc. contributed to the demonstration, which ran on Amazon Web Services and Google Cloud resources.